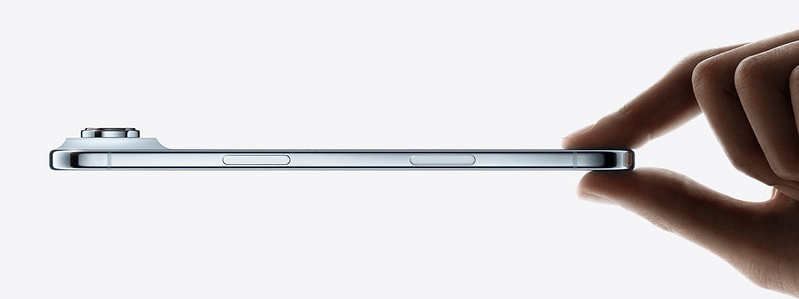

How does the recently announced iPhone Air make you feel? Look beyond its smaller battery or its single camera lens, and focus on what you feel when you see this quintessential Apple marketing image:

Apple wants it to feel futuristic and exciting. Seeing the beautifully crafted presentation of the iPhone Air brought me back to the days of Jony Ive’s voice-over Apple videos, a bygone era when design and excitement could be associated with an Apple product.
