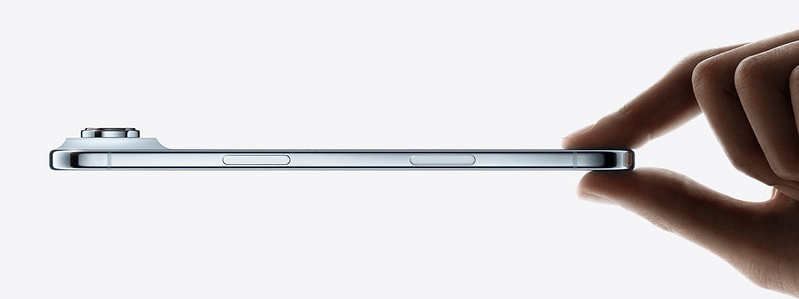

The iPhone Air needs a worthy companion, so it’s time for Apple to introduce the new Apple Watch Air: an elegant ultra-thin smartwatch focused on health.

Author: Ivan Rodriguez

iPhone Air: compromise or innovation?

How does the recently announced iPhone Air make you feel? Look beyond its smaller battery or its single camera lens, and focus on what you feel when you see this quintessential Apple marketing image:

Apple wants it to feel futuristic and exciting, and seeing the beautifully crafted presentation of the iPhone Air brought me back to the days of Jony Ive’s voice-over Apple videos, a bygone era when design and excitement could be associated with an Apple product.

Boox Color Go 7 tips and tricks

So you just got a Boox Color Go 7, one of the most versatile e-readers on the market, and you want to know how to configure it for the best experience. If that’s you, read on for the best tips and tricks to personalize your new e-ink tablet.

This post will cover how to configure it with its out-of-the-box software, installing as few apps as possible. This is the ideal setup for everyday users, and for those who want to get their device ready in a matter of minutes.

AI gadgets of 2025: Black Mirror meets reality

When I recently wrote about the most noteworthy AI gadgets of 2024, I couldn’t help but wonder if the tech industry was nearing the limit of what’s possible with AI. But just a few weeks into 2025, the answer is already a resounding “no”: there is no limit in sight.

Indeed, what we can achieve with AI seems unbounded, as the recent CES 2025 event in Las Vegas showed. New AI products were unveiled with astonishing features and unbelievable promises, seemingly straight out of a Black Mirror episode.

2024, a year of AI breakthroughs and backlash

2024 is drawing to a close, and it stands out as one of the most influential years in the history of artificial intelligence (AI). Let’s take a moment to recap the changes that defined this year, when AI went from a buzzword to a product in its own right.

We’ve dealt with failed gadgets, like the Humane Ai Pin. This wearable integrated a camera, a projector and a speaker into a magnetic pin. The gadget replicated some smartphone functions, and its sleek design had a certain wow factor. But it did it all in the worst ways possible, neglecting usability and affordability.

Unlock Pixel’s best AI features on your iPhone

With the recent launch of the iPhone 16, I thought it would be great to find workarounds for the AI feature gaps in iOS, especially for people switching from an Android device.

Here’s how to set up an iPhone and get some of the most popular smarts from a Pixel phone: Circle to Search, Google Lens, Gemini and even Work profiles.

AI: the good, bad, ugly and everything in between

ChatGPT gained mainstream popularity in 2023 and changed how Big Tech companies approached artificial intelligence (AI). This marked a major turning point in the AI Wars, a race between leading tech companies to develop the most advanced AI technology.

While AI has been studied and developed for decades, large language models (LLMs) are what finally captured the consumer market’s imagination. Conversational and generative AI finally feels human-like, especially now that models are trained on massive datasets.

Mainstream media has struggled to explain what LLMs are and what they can actually do, often focusing on the most alarming aspects of these AI models’ capabilities. Will AI eliminate our jobs? Will AI cause the end of the world? Will AI abuse your personal data?

3 features iPhone needs to steal back the show

Now that we are only 3 years away from the iPhone’s 20th anniversary, it’s easier to imagine what it will look like on its big birthday. The past few years have helped rule out a few features that seemed more plausible not long ago.

Pixelbook: the ultimate Chromebook revival for 2024

I fell in love with the Pixelbook about two months ago. I saw a coworker at Google using one, and I was immediately impressed by its extreme thinness. The Pixelbook is as thin as two stacked USB-C ports. Why hadn’t I paid any attention to such a beautiful device until now?

Microsoft and ARM, a rocky romance

Microsoft announces new Surface devices! Their light form factor blends the experience of a traditional laptop with that of a tablet. A new Windows version, built specifically for low-power ARM processors, promises security and performance improvements. It gives users access to their favorite Windows apps with all-day battery life. Microsoft believes these 2-in-1 gadgets can finally become its Apple killers.

You’d think that paragraph was describing the new Surface devices announced at Microsoft’s Copilot+ PC event earlier this month. Or perhaps you thought it referred to the Surface Pro X, launched in 2019. In fact, it was describing the Surface RT, launched in 2012.

That’s right: the Copilot+ PC launch is Microsoft’s third attempt to usher in a new generation of Windows devices using the ARM architecture. What happened in the previous two?
